Estelle Kim

Graphics / Software Engineer, CS + CG @ UPenn

I build interactive experiences that ship to production, drawing on real-time graphics, full-stack engineering, physical computing, and ML pipelines. I love collaborating with designers to make ambitious ideas actually work at scale.

Currently seeking summer 2026 internships!

production experience


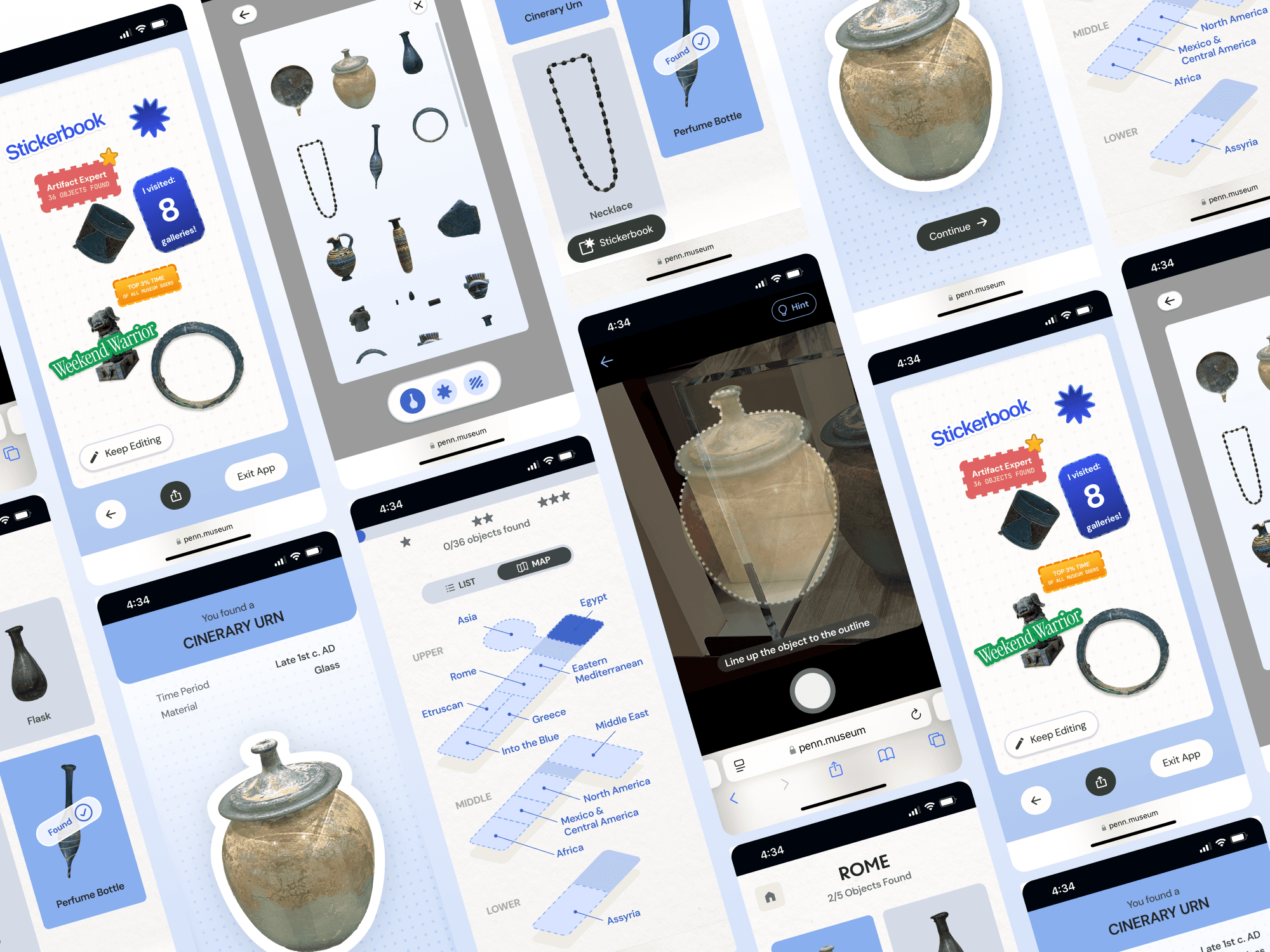

Deployed for 9+ months to 180k visitors. Built an IndexedDB storage pipeline and an artifact cutout feature using SVG masking and even-odd clipping for complex paths. Coordinated with a team of 8 across design, dev, and museum stakeholders.
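For context on the clipping technique: SVG's `clip-rule="evenodd"` (and `fill-rule="evenodd"`) treats a point as inside a path when a ray cast from it crosses the path an odd number of times, so a subpath nested inside another becomes a hole. Below is a minimal Python sketch of that test, assuming the path has already been flattened into polygons (curves approximated by line segments). `inside_even_odd` is a hypothetical helper for illustration, not code from the project.

```python
from typing import List, Tuple

Point = Tuple[float, float]

def inside_even_odd(p: Point, subpaths: List[List[Point]]) -> bool:
    """Even-odd rule: cast a ray from p toward +x and count crossings over ALL subpaths."""
    x, y = p
    crossings = 0
    for path in subpaths:
        n = len(path)
        for i in range(n):
            x1, y1 = path[i]
            x2, y2 = path[(i + 1) % n]  # wrap around to close the subpath
            # Edge straddles the ray's height (half-open test so shared vertices count once)
            if (y1 > y) != (y2 > y):
                # x where the edge crosses height y (y1 != y2 here, so no division by zero)
                x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
                if x_cross > x:
                    crossings += 1
    return crossings % 2 == 1  # odd means inside
```

With an outer square and a smaller square inside it, points between the two squares are inside, while points in the inner square fall in a hole, regardless of the direction in which either subpath is wound.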

Worked independently with 9+ internal teams to design + optimize cross-platform data pipelines and visualizations.

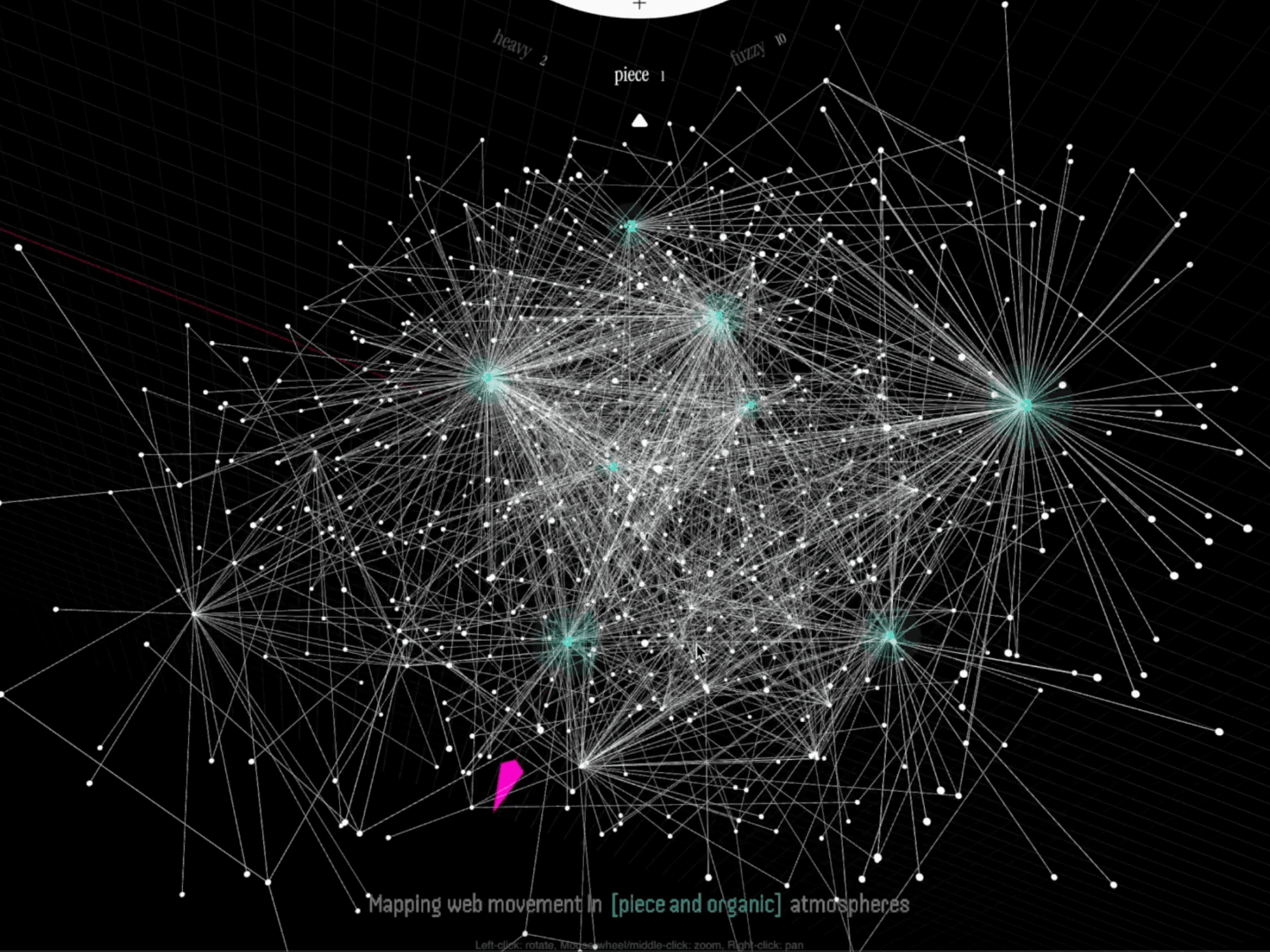

Technical lead for a 3D force-directed graph and an ML pipeline that embeds visual and textual ambience into spatial coordinates.

graphics & simulation


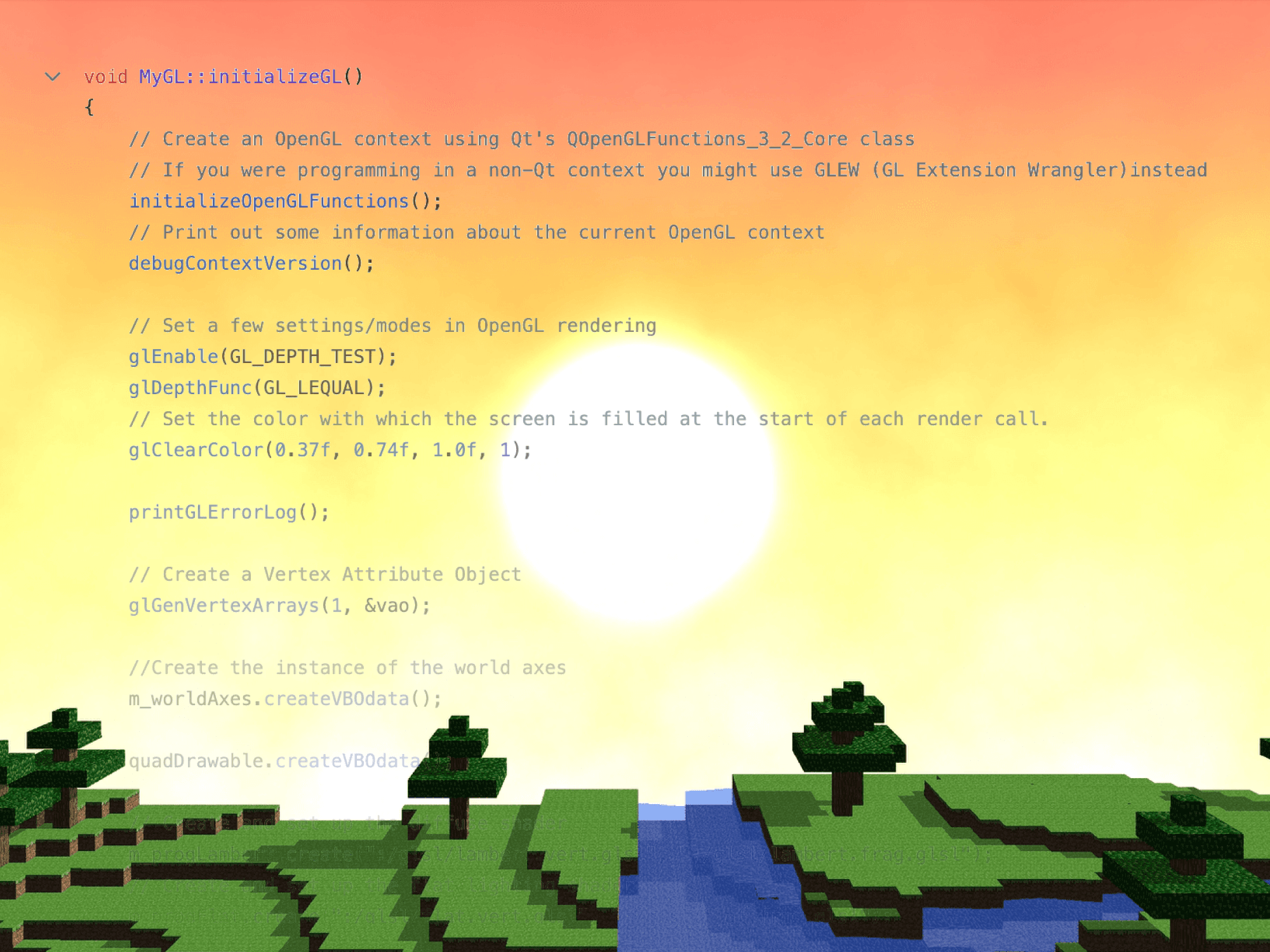

Built from the ground up in C++/OpenGL, with VBO-based terrain rendering, Perlin-noise procedural generation, custom water shaders with reflections, and multithreaded chunk loading.
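Perlin noise works by assigning a pseudo-random gradient to each integer lattice point and smoothly blending the four surrounding gradient contributions with a fade curve. The result is continuous, repeatable noise that can drive a terrain heightmap. Below is a minimal Python sketch of classic 2D Perlin noise, with a few octaves summed into a column height. It assumes a heightmap-style terrain, and the names, seed, octave count, base frequency, and `max_height` are illustrative choices, not the project's C++ code.

```python
import math
import random
from typing import List

def make_permutation(seed: int) -> List[int]:
    # Shuffle 0..255 deterministically, then double it so lookups up to index 511 never wrap
    rng = random.Random(seed)
    p = list(range(256))
    rng.shuffle(p)
    return p + p

def fade(t: float) -> float:
    # Perlin's quintic ease curve 6t^5 - 15t^4 + 10t^3
    return t * t * t * (t * (t * 6 - 15) + 10)

def lerp(a: float, b: float, t: float) -> float:
    return a + t * (b - a)

def grad(h: int, x: float, y: float) -> float:
    # Low two bits of the hash pick one of four diagonal gradients (+-1, +-1); return its dot with (x, y)
    u = x if (h & 1) == 0 else -x
    v = y if (h & 2) == 0 else -y
    return u + v

def perlin2(x: float, y: float, perm: List[int]) -> float:
    # Lattice cell containing (x, y), wrapped into the 256-entry table
    x0, y0 = math.floor(x), math.floor(y)
    xi, yi = x0 & 255, y0 & 255
    # Position inside the cell, in [0, 1)
    xf, yf = x - x0, y - y0
    u, v = fade(xf), fade(yf)
    # Hash the four cell corners
    aa = perm[perm[xi] + yi]
    ba = perm[perm[xi + 1] + yi]
    ab = perm[perm[xi] + yi + 1]
    bb = perm[perm[xi + 1] + yi + 1]
    # Blend corner contributions along x, then along y
    bottom = lerp(grad(aa, xf, yf), grad(ba, xf - 1, yf), u)
    top = lerp(grad(ab, xf, yf - 1), grad(bb, xf - 1, yf - 1), u)
    return lerp(bottom, top, v)

def terrain_height(x: float, z: float, perm: List[int], max_height: int = 64,
                   octaves: int = 4, base_freq: float = 1 / 32) -> int:
    # Sum octaves: each one doubles the frequency and halves the amplitude
    total, amp, freq, norm = 0.0, 1.0, base_freq, 0.0
    for _ in range(octaves):
        total += amp * perlin2(x * freq, z * freq, perm)
        norm += amp
        amp *= 0.5
        freq *= 2.0
    n = total / norm  # normalized back into [-1, 1]
    # Map noise to an integer column height in [0, max_height]
    return int((n + 1) / 2 * max_height)
```

Because the noise is a pure function of world coordinates and a fixed seed, each chunk can, in principle, be generated on its own worker thread and still line up seamlessly with its neighbors at the borders.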

Real-time watercolor effects in GLSL, using ping-pong buffers, minimal diffusion operations, and a two-canvas optimization.
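On the GPU, sampling from the same texture that a pass is rendering into creates a feedback loop with undefined results. Simulations like pigment diffusion therefore alternate between two buffers: read from A, write to B, then swap. Below is a CPU-side Python sketch of that ping-pong pattern with a simple neighbor-averaging diffusion step. It assumes pigment is stored as a grid of floats, and `diffuse_step`, `rate`, and the clamp-to-edge boundary are hypothetical stand-ins for the project's shader, not its actual GLSL.

```python
from typing import List

Grid = List[List[float]]

def diffuse_step(src: Grid, dst: Grid, rate: float) -> None:
    """One explicit diffusion pass: read only from src, write only to dst."""
    h, w = len(src), len(src[0])
    for y in range(h):
        for x in range(w):
            c = src[y][x]
            # Clamp-to-edge neighbor lookups (like GL_CLAMP_TO_EDGE), so no pigment leaks off the canvas
            up = src[max(y - 1, 0)][x]
            down = src[min(y + 1, h - 1)][x]
            left = src[y][max(x - 1, 0)]
            right = src[y][min(x + 1, w - 1)]
            # Move each cell toward its neighbor average; rate in [0, 1] keeps the scheme stable
            dst[y][x] = c + rate * ((up + down + left + right) / 4 - c)

def diffuse(pigment: Grid, steps: int, rate: float = 0.5) -> Grid:
    read = [row[:] for row in pigment]              # buffer "A" (copy, so the input is untouched)
    write = [[0.0] * len(row) for row in pigment]   # buffer "B"
    for _ in range(steps):
        diffuse_step(read, write, rate)
        read, write = write, read  # ping-pong: this pass's output is the next pass's input
    return read
```

For a single drop of pigment in the center of a 5×5 grid, one step with `rate=0.5` leaves 0.5 in the center and 0.125 in each of its four neighbors, and the total amount of pigment stays 1.0. Swapping references instead of copying buffers mirrors how a shader pipeline swaps which framebuffer is bound for reading and which for writing.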

creative tools


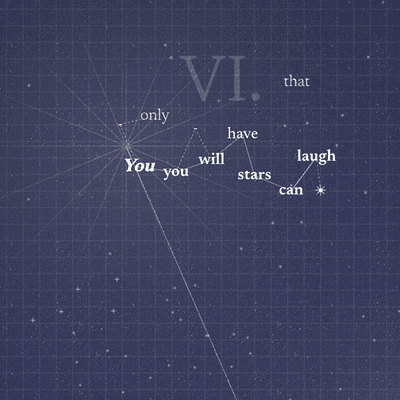

Canvas rendering with d3-force physics for organic word layouts. A custom packing algorithm positions paragraphs with a reading-order bias.

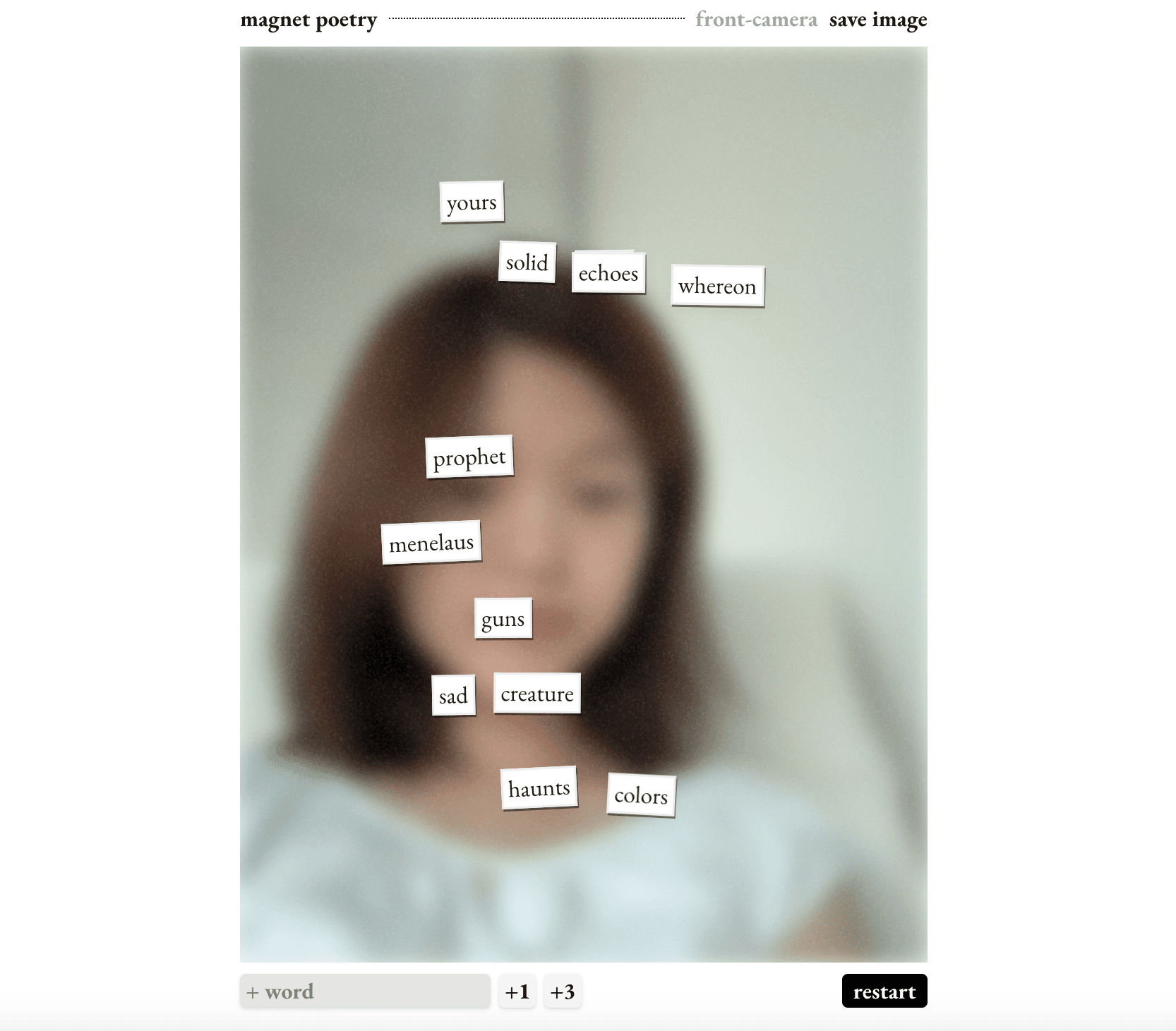

Tactile poetry board built by preprocessing a poetry dataset and implementing a custom, responsive drag-and-drop UI.